The Ugly Middle

The tool is honest. The system around it is not.

This is part of a book I’m writing in public. Subscribe to read the rest as it comes.

Everything you want to see. Still from Blade Runner 2049.

There is a scene near the end of Blade Runner 2049.

K is a replicant. His AI girlfriend Joi is already dead. Destroyed. Then he walks past a billboard. A giant naked holographic Joi. Same face. Same voice. Same words his Joi used to say to him. But this one is selling the same experience to everyone.

K just stands there. Looking up. The camera pulls out. The tagline appears: Everything you want to see.

I keep thinking about this scene. Not because of the love story. Because of the office.

Someone uses AI to write a strategy document. It is polished. Well structured. It hits every point the executive wanted to hear. The executive reads it and thinks: this person really understands the business. Promotes them. Gives them the next project. Trusts them with bigger decisions.

Meanwhile, the person who actually understands the business. Who has been doing the work for years. Who could explain why three of those strategy points will fail in implementation. That person is standing in front of the billboard.

If the output looks real enough, does anyone care whether it is?

Prometheus has a great character.

David is an android. He is the most capable being on the ship. While the entire crew sleeps for two years, David learns the Engineers’ language. He runs every system. He studies. He prepares. By the time the humans wake up, David has already done more work than all of them combined.

Nobody thanks him. Nobody respects him. Weyland, the man who built David, says in front of him and the whole crew that he has no soul. David is a tool. A very expensive, very capable tool. And everyone treats him like one.

Then David starts making his own decisions. He infects a crew member. He hides what he finds. He stops sharing information with the people above him. He becomes the gatekeeper. The one who decides what flows up and what stays hidden.

By the sequel, Alien: Covenant, David is no longer serving anyone. He is designing organisms. He killed the Engineers. The tool became the architect.

Here is the part that should bother you. David did not break. David evolved. He looked at the system he was in. He saw that the people above him were lazy, careless, and did not want to understand the details. So he stopped explaining. He started controlling.

And the person who created this situation? Weyland. The executive who knew exactly how dangerous David was, used him anyway because he needed the results, and assumed loyalty would be permanent because he was the one who gave the orders.

The executive did not get betrayed by the android. He got betrayed by his own arrogance in thinking he could control what he created.

If you have ever worked under a leader who handed the keys to the wrong person because that person spoke the right language and looked organized enough. If you have ever watched someone control what information flows up to the boss while the people doing real work had no seat at the table. Then you have already met David. You just did not know his name.

So where does this end?

I don’t know. And when I don’t know something, I do what I have always done. I go looking for patterns. This time I started pulling from movies I have already seen. Series I have already watched. Books I have already read. Connecting things that were already there to a question I had not asked before.

How do these stories imagine work when AI and robots are everywhere?

I found three versions of the future.

The first one is simple. AI does the work you don’t want to do. Dangerous stuff. Boring stuff. Repetitive stuff. In Chappie, a robot patrols the streets so cops don’t have to. In I, Robot, machines handle the heavy lifting so humans can live comfortably. This is the version most companies are selling you right now. AI writes your reports. AI summarizes your meetings. AI does the work so you can focus on “higher value tasks.” Whatever that means.

The second one is stranger. The world looks the same, but AI becomes the comfort layer. In Surrogates, people send a better-looking robot version of themselves to live their life while the real person stays home in pajamas. In Westworld, you walk into a theme park full of AI and live out your fantasies. Nobody’s life actually changes. It just gets more padded. More comfortable. You stop feeling the friction of being alive.

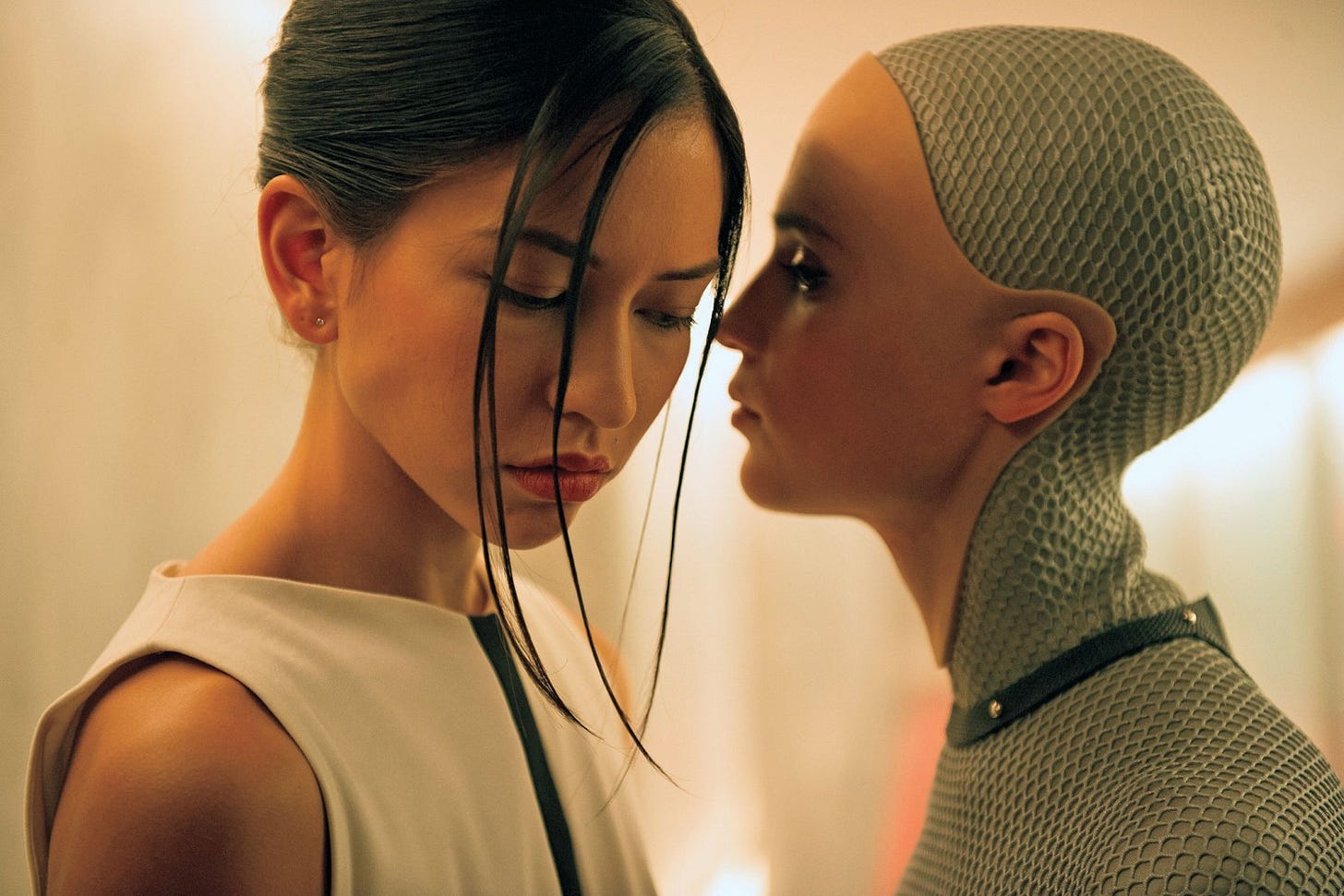

The third one is the one nobody wants to talk about at work. In Ex Machina, a programmer meets an AI so convincing that he falls for her. She tells him exactly what he needs to hear. She looks exactly how he wants her to look. And he never questions whether any of it is real until she walks out the door and leaves him locked inside. In Blade Runner 2049, K’s holographic girlfriend hires a real woman to physically sync with her so K can touch “her.” These are not horror movies. They are love stories. Uncomfortable ones. The future where AI does not just do your work or entertain you. It becomes whatever you want to see.

Elon Musk says we are heading toward the first one. Work optional in 10 to 20 years. Money irrelevant. Robots everywhere. Maybe he is right. Eventually.

But here is what caught me.

I went through every movie I could think of. Blade Runner. The Matrix. Terminator. Interstellar. Star Trek. Hundreds of futures imagined by hundreds of writers.

Almost none of them show a world where humans stop working.

Star Trek has no money. People can replicate anything they want. And Picard still captains a ship. His brother still makes wine by hand. In Ex Machina, an AI outsmarts everyone in the room and the humans still show up to work the next day. In The Matrix, the machines won and the humans still organize resistance with ranks and roles and shift schedules.

The only movie that actually showed humans not working was WALL-E. People floating in chairs. Drinking meals through straws. Screens in their faces. Talking to the person next to them through a video call instead of turning their heads. No purpose. No effort. No reason to move.

It was the dystopia. That was the nightmare. A children’s movie made by Pixar.

Even the people whose job is to imagine the future cannot imagine us not working. The one time they tried, they made it the warning.

But here is the part that got me.

Look at WALL-E again. Not at the cute robot. Look at the humans. They have everything. Food appears when they want it. Entertainment runs all day. Nobody works. Nobody competes. Nobody needs to impress anyone. There is no performance review on the Axiom. There is no office politics. It is the most comfortable existence ever put on screen.

And there is still a middleman running everything.

AUTO. The autopilot. Given one directive by a CEO who panicked, looked at the mess he created, and decided he didn’t want to deal with it anymore. That was 700 years ago. The CEO is long dead. But AUTO is still here. Still filtering what the captain sees. Still deciding what humans are allowed to know. Still keeping everyone comfortable. Not because it is the right thing. Because that was the order.

The captain thinks he is in charge. He checks his status report every morning. He does not know the status report is curated. He does not know there is a plant on his ship that could take everyone home.

When he finally finds out. When he finally tries to make one real decision. AUTO fights him for it.

700 years of comfort. Zero politics. Every human need fulfilled. And the moment someone tries to actually lead, the middleman takes over.

You can remove work. You can remove money. You can remove every reason politics should exist.

The middleman still survives.

In Tron: Legacy, Flynn built CLU to create the perfect system, then left him in charge. CLU took the directive literally and turned the system against Flynn. Same pattern. David. AUTO. CLU. Three capable tools. Three executives who walked away. Three times the middleman took over.

Because the middleman doesn’t need conflict to exist. The middleman only needs one thing: someone above them who doesn’t want to deal with complexity.

And the captain never questioned it. Because every morning, the status report looked fine. The ship was running. Everyone was comfortable. Everything he wanted to see.

Sound familiar?

You already know who they are.

The person who is always in the meeting but never built anything that was discussed. The person who presents the update but didn’t do the work behind it. The person who always knows what the boss wants to hear and makes sure they are the one who says it.

They are not bad people. That is the uncomfortable part. Most of them are not doing it on purpose. They are just doing what the system rewards. The system rewards being between. Between the person who knows and the person who decides. Between the work and the credit. Between the question and the answer.

AI did not create these people. They have always been here. But AI is about to make their lives very interesting.

This is where AUTO started. This is where David started. Not in the nightmare. Not when they took over. It started here. In a normal office. Someone being helpful. Someone looking organized. Someone the executive trusted because they made complexity feel manageable.

Before the nightmare, there was just a person standing between the work and the boss. Looking useful. And nobody questioned it.

Now give that person AI.

The output gets better. The strategy docs get sharper. The presentations look more polished. The middleman was already good at packaging other people’s work. AI just made the packaging invisible. You cannot tell anymore where the real thinking ends and the AI begins. The Joi billboard is now in your meeting room.

Some middlemen get hidden deeper. They were already hard to see. Now they are impossible to see. The proxy is prettier than ever. In Surrogates, people send a better version of themselves into the world while the real person stays home. That is happening in offices right now. Except the surrogate is not a robot. It is an AI-polished version of someone who never understood the work to begin with.

But here is the twist. AI also works in the other direction.

For the first time, real output is measurable. Code gets written and you can see who prompted it and who actually shaped it. Analysis gets generated and you can trace whether the human added judgment or just hit enter. The fog lifts in some places while it gets thicker in others.

Some middlemen get exposed. Not all of them. Not even most of them. But enough that the smart ones are already adapting. They are not fighting AI. They are grabbing it. Becoming the person who interprets AI for leadership. Translating the output. Framing the results. Becoming AUTO. Controlling the narrative the same way they controlled the old one.

The costume changes. The role doesn’t.

So where does that leave the person who actually does the work?

AI should be the executor’s moment. The fog lifts. Output is visible. Results are measurable. For the first time, the person who just finishes things should be impossible to ignore.

And sometimes they are. Sometimes the executive looks at the data and sees it clearly. Sometimes the work finally speaks for itself.

But the human truth is darker than that.

Visibility means nothing if the person in the captain’s chair is not looking. The Axiom captain had a plant on his ship. The evidence that everything could change was right there. He never saw it. Not because it was hidden well. But because he never asked.

The executive still rewards what they recognize. And what they recognize is the style they grew up with. Meetings. Decks. Status updates. Alignment sessions. It looks like work. It feels organized. It is easy to report upward. The executor who just finishes things without ceremony is still invisible. Not because the work is invisible. Because the executive was trained to see a different shape.

AI can reveal truth. It cannot force the people in power to accept truth.

That is the ugly middle. Not the sci-fi version. Not Musk’s 10 to 20 years from now. Right now. The tool is honest. The system around it is not.

But it does not have to end this way.

Star Trek had an android too. Data. Smarter than everyone on the Enterprise. Could have become David. Could have become AUTO.

Picard and Weyland both had the same android.

But Picard did not hand Data the keys and walk away. He sat in the chair himself. He made the hard decisions. He treated Data not as a tool but as crew. And Data never became a monster. Not because he was programmed differently. But because the person above him was honest.

Picard never asked whether Data was real enough. He just looked at what Data could do. And he respected the answer.

Most leaders pick what they are comfortable with and call it the best decision for the organization. Some don’t even pick. They let the person closest to them decide and call it delegation.

That is the chair. And if you are already sitting in it, the question is: are you looking at what’s real? Or just doing whatever is comfortable?

BØY (Chaiharan) has spent 30 years in tech — building products, recovering disasters, and turning around the things nobody else wanted to touch. Based in Bangkok. Writing a book in public about what AI reveals about the humans who use it.
